AI safety

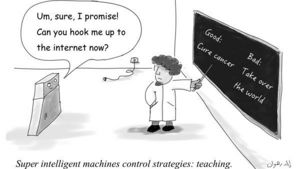

AI safety is a field of research concerned with a sequence of increasingly specific problems that arise when developing artificial intelligence (AI) and artificial general intelligence (AGI). Its goals center on reducing the risks posed by AI, especially powerful AI, and it includes problems of misuse, robustness, reliability, security, and privacy, among others. AI safety subsumes AI control: ensuring that AI systems try to do the right thing, and in particular that they don't competently pursue the wrong thing. More specific still is value alignment: understanding how to build AI systems that share human preferences and values, typically by learning them from humans.[1]

Dr. Roman V. Yampolskiy/Alex Klokus - What We Need To Know About A.I. - WGS 2018

World Government Summit. In 2013, His Highness Sheikh Mohammed bin Rashid Al Maktoum, Vice President and Prime Minister of the UAE and Ruler of Dubai, commissioned the establishment and launch of the World Government Summit, a global knowledge exchange platform for governments. The Summit was created to stay at the cutting edge of technological advancement and to keep abreast of the latest trends in government practice and innovation by bringing together policymakers with business and civil society leaders. According to its organizers, the World Government Summit ushers in a new era of responsibility and accountability in which governments can better serve people.[2]

Robert Miles - Why Would AI Want to do Bad Things? Instrumental Convergence

How can we predict that an AGI with unknown goals would behave badly by default? March 2018.[3]